TerraMind

Large-scale Generative Multimodality for Earth Observation

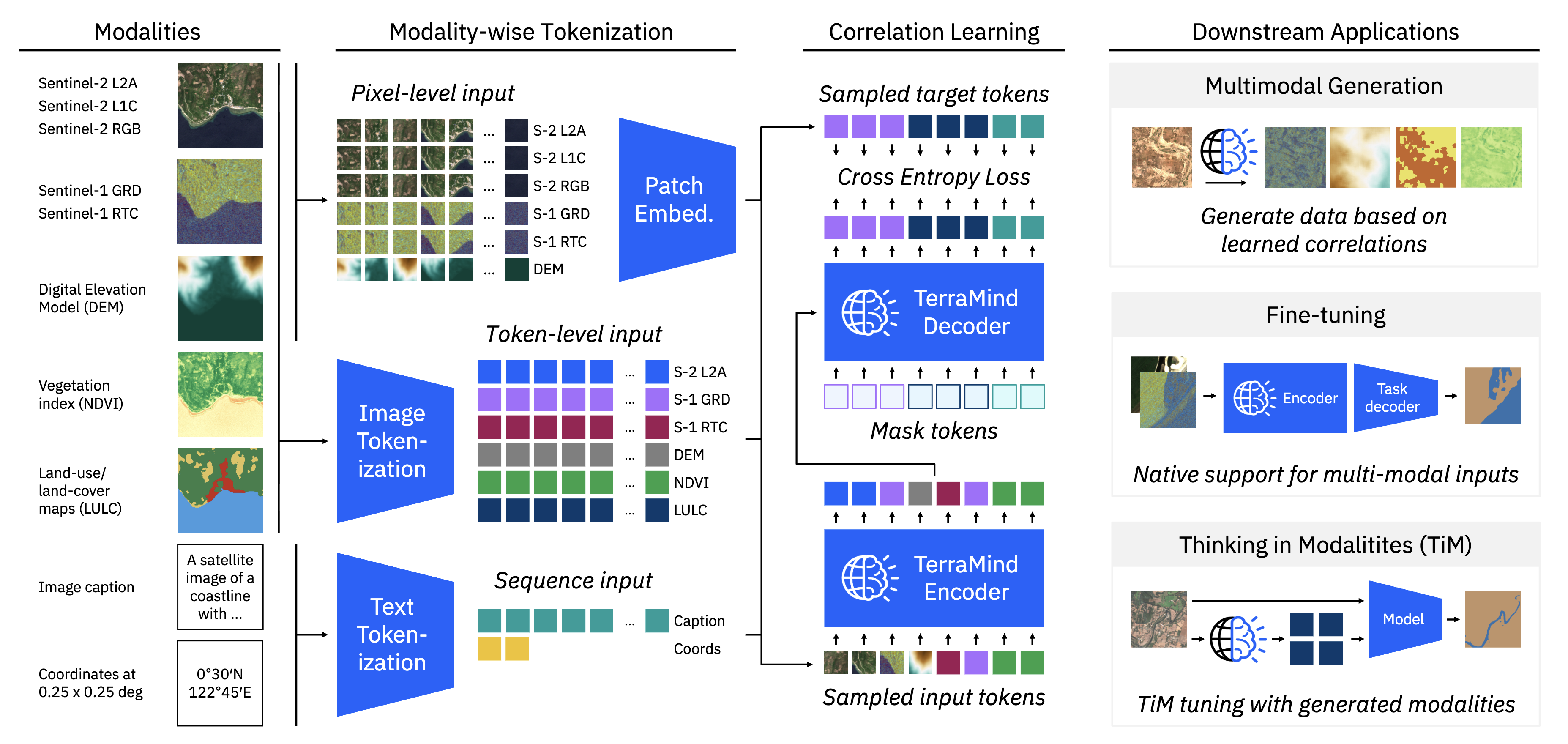

TerraMind is a multimodal foundation model designed specifically for Earth observation tasks. Published at ICCV 2025, it combines vision and language understanding for geospatial applications.

Key Features

- Multimodal Architecture: Integrates visual and textual information for Earth observation analysis

- Large-scale Pretraining: Trained on large datasets of satellite imagery and associated metadata

- Generative Capabilities: Can generate descriptions, answer questions, and provide insights about Earth observation data

- Open Source: Full implementation available on GitHub, with pretrained models on Hugging Face

Impact

- 60+ citations within months of publication

- Featured at ICCV 2025, one of the top computer vision conferences

- Collaboration with NASA and ESA for planetary observation applications

- Used in climate impact assessment and natural hazard detection