Phaedra

Learning high-fidelity discrete tokenization for the physical sciences

Phaedra is a discrete tokenization approach designed specifically for physical sciences data, including Earth observation, weather modeling, and climate assessment. It addresses a fundamental challenge in applying modern deep learning to the physical sciences: how to transform high-dimensional data into sequences that can be efficiently learned, generated, and generalized.

The Challenge

Tokens are discrete representations that allow modern deep learning to scale by transforming high-dimensional data into sequences. However, existing tokenizers were designed around the requirements of realistic visual perception of images, and they struggle to capture both fine details and precise magnitudes, two properties that are crucial in physical sciences applications.
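To make the notion of a token concrete, here is a minimal sketch of generic patch-based vector quantization, the mechanism behind many image tokenizers: a 2D field is cut into patches and each patch is replaced by the index of its nearest codebook vector. This is not Phaedra's tokenizer; the function names and the toy codebook are assumptions made for illustration.

```python
import numpy as np


def patchify(field: np.ndarray, p: int) -> np.ndarray:
    """Split an (H, W) field into non-overlapping p x p patches of shape (N, p*p)."""
    h, w = field.shape
    if h % p or w % p:
        raise ValueError("field size must be divisible by the patch size")
    return field.reshape(h // p, p, w // p, p).transpose(0, 2, 1, 3).reshape(-1, p * p)


def unpatchify(patches: np.ndarray, shape: tuple, p: int) -> np.ndarray:
    """Inverse of patchify: reassemble (N, p*p) patches into an (H, W) field."""
    h, w = shape
    return patches.reshape(h // p, w // p, p, p).transpose(0, 2, 1, 3).reshape(h, w)


def tokenize(field: np.ndarray, codebook: np.ndarray, p: int) -> np.ndarray:
    """Replace each patch by the index of its nearest codeword (squared L2 distance)."""
    patches = patchify(field, p)                                            # (N, p*p)
    d2 = ((patches[:, None, :] - codebook[None, :, :]) ** 2).sum(axis=-1)  # (N, K)
    return d2.argmin(axis=1)                                                # (N,) token ids


def detokenize(tokens: np.ndarray, codebook: np.ndarray, shape: tuple, p: int) -> np.ndarray:
    """Look up each token's codeword and reassemble the field."""
    return unpatchify(codebook[tokens], shape, p)


# Toy usage: an 8x8 field becomes a sequence of four integer tokens.
rng = np.random.default_rng(0)
field = rng.standard_normal((8, 8))
codebook = patchify(field, 4)  # hypothetical codebook: the field's own four patches
tokens = tokenize(field, codebook, 4)
print(tokens)  # [0 1 2 3]
```

With this toy codebook the round trip is exact. In a learned tokenizer the codebook is trained and patches usually pass through an encoder first, so reconstruction is only approximate, and how much fine detail and magnitude survives that approximation is the fidelity question raised above.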

Key Innovation

Inspired by classical shape-gain quantization and basis function decomposition, Phaedra proposes a novel tokenization approach that:

- Captures Fine Details: Preserves the intricate spatial patterns and structures in physical data

- Maintains Precise Magnitudes: Accurately represents the quantitative values essential for scientific analysis

- Uses a Dual-Stream Architecture: Separates amplitude (magnitude) and image (shape) information into distinct token streams

- Enables High-Fidelity Reconstruction: Improves reconstruction quality over existing image tokenizers across multiple metrics

- Generalizes Across Domains: Demonstrates out-of-distribution generalization to known PDEs under unseen conditions, to entirely unknown PDEs, and to real-world data
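Shape-gain quantization, the classical technique cited above, factors a vector into a non-negative gain (its magnitude) and a unit-norm shape, and quantizes the two separately. The sketch below shows that classical idea for a single vector; it is not Phaedra's implementation, and the function names, the random shape codebook, and the log-spaced gain levels are illustrative assumptions.

```python
import numpy as np


def shape_gain_quantize(x, shape_codebook, gain_levels):
    """Classical shape-gain VQ of one vector x.

    shape_codebook: (K, D) array whose rows are unit-norm shape codewords.
    gain_levels:    (G,) array of non-negative scalar gain levels.
    Returns (shape_index, gain_index).
    """
    # Shape: choose the unit codeword most aligned with x (maximum inner product).
    scores = shape_codebook @ x
    k = int(np.argmax(scores))
    # Gain: for a fixed unit shape s, the scale minimizing ||x - g s|| is <x, s>;
    # clip it at zero and snap it to the nearest available gain level.
    g = max(float(scores[k]), 0.0)
    j = int(np.argmin(np.abs(gain_levels - g)))
    return k, j


def shape_gain_dequantize(k, j, shape_codebook, gain_levels):
    """Rebuild x_hat = gain * shape from the two indices."""
    return gain_levels[j] * shape_codebook[k]


# Toy usage: one shape codebook serves vectors whose magnitudes differ by 10^4.
rng = np.random.default_rng(0)
shapes = rng.standard_normal((16, 8))
shapes /= np.linalg.norm(shapes, axis=1, keepdims=True)  # make codewords unit-norm
gains = np.logspace(-3, 3, 61)  # hypothetical log-spaced gain levels, 1e-3 .. 1e3
for scale in (1e-2, 1e2):
    x = scale * shapes[5]
    k, j = shape_gain_quantize(x, shapes, gains)
    x_hat = shape_gain_dequantize(k, j, shapes, gains)
    rel_err = np.linalg.norm(x - x_hat) / np.linalg.norm(x)
    print(k, gains[j], rel_err)
```

Because the gain is quantized on its own (here on a logarithmic grid), the same shape codewords can represent inputs whose magnitudes span several orders of magnitude, which a single fixed codebook handles poorly. The correspondence to Phaedra's amplitude and image tokens is by analogy only.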

Technical Approach

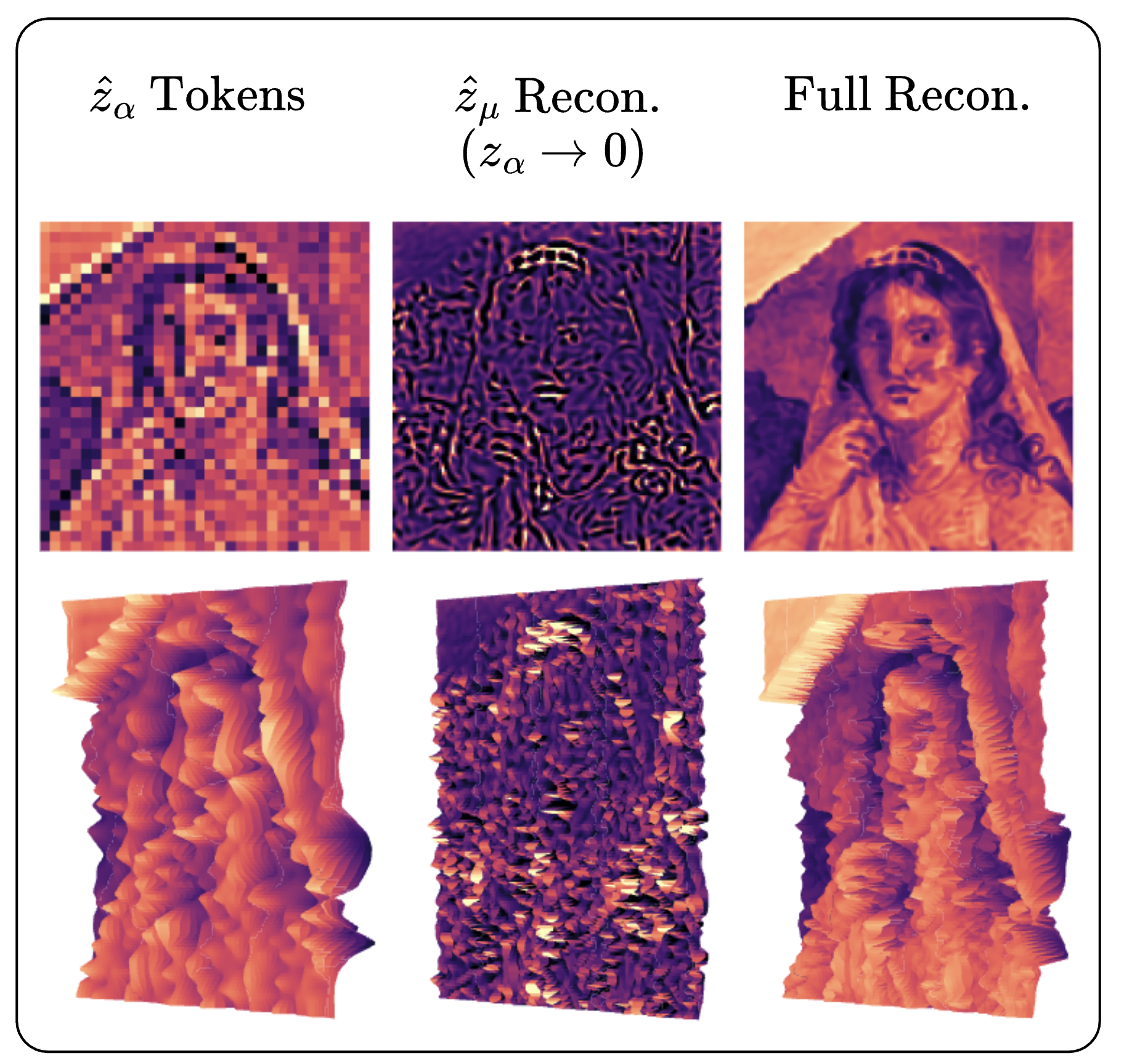

Phaedra’s tokenization pipeline works in two streams:

- Amplitude Tokens: Encode the precise magnitudes and globally coherent structures

- Image Tokens: Capture fine details and local high-frequency features

This decomposition, inspired by classical signal processing, allows the model to:

- Focus on fine details while maintaining precise amplitudes

- Learn global and local features separately

- Achieve better reconstruction with fewer tokens

- Generalize to new physical systems
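Basis function decomposition, the other classical ingredient named above, can be illustrated with proper orthogonal decomposition (POD): snapshots are centered, the SVD supplies an orthonormal set of global spatial modes, and each snapshot is summarized by the amplitudes of a few leading modes. This is a generic POD sketch, not Phaedra's method; the synthetic sine-mode data and the names `pod` and `reconstruct` are assumptions for illustration.

```python
import numpy as np


def pod(snapshots: np.ndarray, k: int):
    """Proper orthogonal decomposition of an (N, D) array of flattened snapshots.

    Returns the mean field (D,), the k leading orthonormal spatial modes (k, D),
    and the amplitude of each mode in each snapshot (N, k).
    """
    mean = snapshots.mean(axis=0)
    centered = snapshots - mean
    # Rows of vt are orthonormal modes, ordered by decreasing singular value (energy).
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    modes = vt[:k]
    amplitudes = centered @ modes.T  # projection of each snapshot onto each mode
    return mean, modes, amplitudes


def reconstruct(mean, modes, amplitudes):
    """Mean field plus the amplitude-weighted sum of modes."""
    return mean + amplitudes @ modes


# Toy usage: 50 snapshots of a 1D field built from two sine modes plus small noise.
rng = np.random.default_rng(0)
x = np.linspace(0.0, 2.0 * np.pi, 64, endpoint=False)
a = 10.0 * rng.standard_normal(50)  # large-amplitude, smooth component
b = rng.standard_normal(50)         # weaker, higher-frequency component
data = (np.outer(a, np.sin(x)) + np.outer(b, np.sin(3 * x))
        + 0.01 * rng.standard_normal((50, 64)))

for k in (1, 2):
    mean, modes, amps = pod(data, k)
    err = np.linalg.norm(data - reconstruct(mean, modes, amps)) / np.linalg.norm(data)
    print(k, err)
```

With one mode the dominant large-scale component is recovered and the weaker, higher-frequency content is left in the residual; adding a second mode captures it, which shows how a truncated basis keeps global structure while finer-scale content must be represented some other way.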

Resources

Project Website

camlab-ethz.github.io/Phaedra

Paper (arXiv)

arxiv.org/pdf/2602.03915v1

Code (GitHub)

github.com/camlab-ethz/Phaedra

Impact

This work bridges the gap between modern deep learning tokenization approaches and the rigorous requirements of the physical sciences, with the aim of enabling more accurate and reliable AI systems for scientific applications.