Quantizing Space and Time

Fusing time series and images for Earth observation

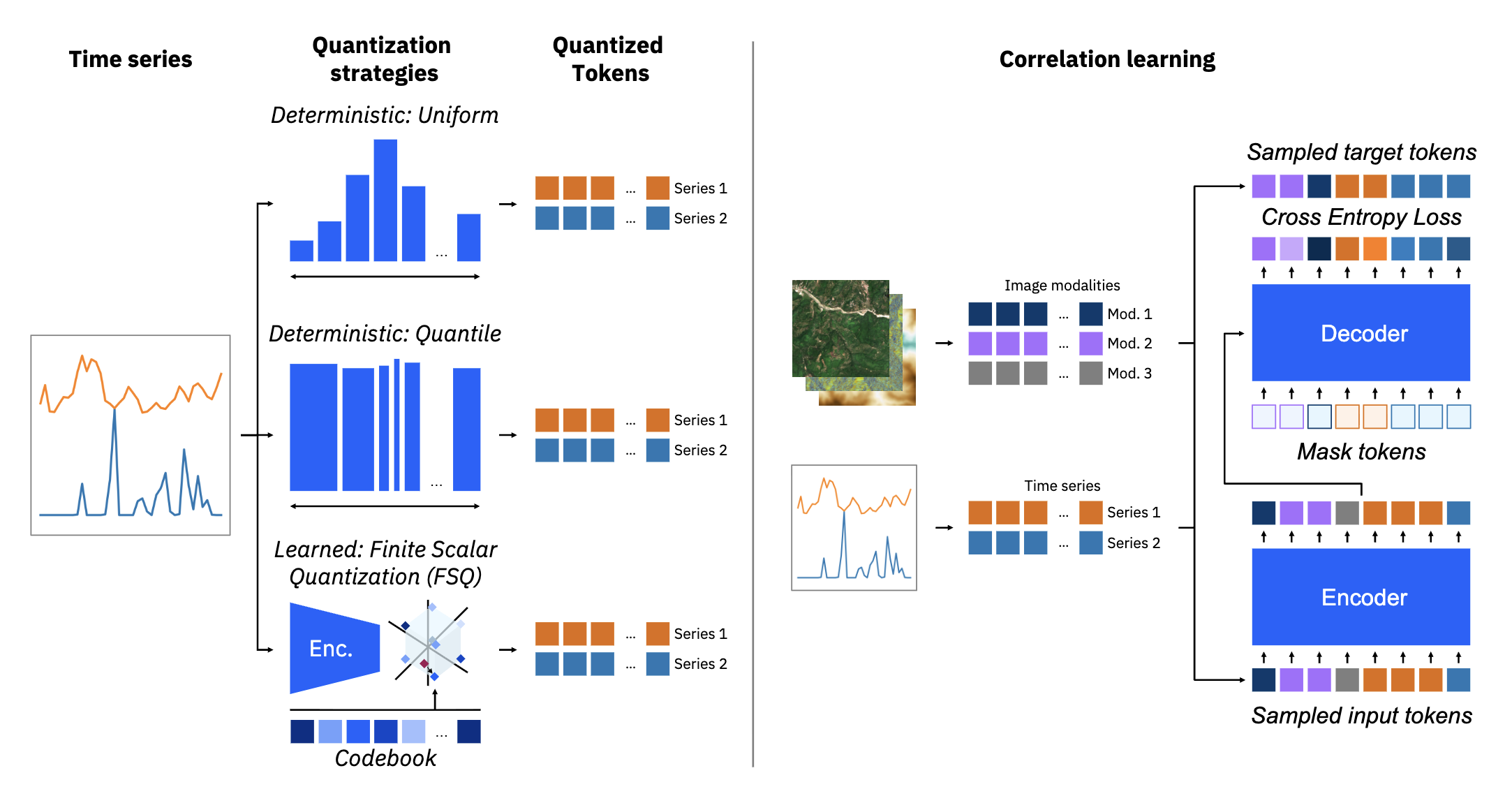

This work introduces a task-agnostic framework for multimodal fusion of time series and images, enabling cross-modal generation and robust downstream performance for Earth observation applications.

Key Innovation

The framework proposes a unified representation space for spatiotemporal data through:

- Discrete Quantization: Transforms continuous time series into discrete tokens

- Masked Correlation Learning: Aligns discrete image and time series tokens

- Task-Agnostic Design: Enables both cross-modal generation and robust downstream performance

- Scalable Architecture: Handles variable-length time series and different spatial resolutions
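
The first two ideas in the list above can be illustrated with a toy sketch. It is not the framework's implementation: the summary does not say how the quantizer or the masking objective is built, so uniform binning stands in for the quantizer here, and the names `quantize_series`, `mask_tokens`, `MASK_ID`, and the sample image tokens are all hypothetical. The sketch only shows how a continuous series of any length becomes a token sequence that can be joined with image tokens and partially masked for a model to reconstruct.

```python
import random

MASK_ID = -1  # hypothetical placeholder id for a masked position


def quantize_series(values, num_bins=8):
    """Map each continuous value to an integer token in [0, num_bins - 1].

    Uniform binning over the observed range is an assumption made for
    illustration; a learned codebook could take its place.
    """
    lo, hi = min(values), max(values)
    if hi == lo:
        # A constant series carries no range information; use one token.
        return [0] * len(values)
    width = (hi - lo) / num_bins
    # The maximum value would land in bin num_bins, so clamp it.
    return [min(int((v - lo) / width), num_bins - 1) for v in values]


def mask_tokens(tokens, mask_ratio=0.5, seed=0):
    """Replace a random subset of tokens with MASK_ID.

    Returns the masked sequence and the sorted masked positions, which a
    model would be trained to reconstruct from the visible tokens.
    """
    rng = random.Random(seed)
    n_mask = int(len(tokens) * mask_ratio)
    positions = set(rng.sample(range(len(tokens)), n_mask))
    masked = [MASK_ID if i in positions else t for i, t in enumerate(tokens)]
    return masked, sorted(positions)


# A short series; any length works because tokens are produced per step.
series = [0.0, 0.5, 1.0, 1.5, 2.0, 4.0]
ts_tokens = quantize_series(series, num_bins=4)

# Hypothetical image tokens, as if produced by a separate image tokenizer.
image_tokens = [5, 2, 7]

# One joint sequence, so masked positions in either modality must be
# predicted from visible tokens in both.
joint = image_tokens + ts_tokens
masked, positions = mask_tokens(joint, mask_ratio=0.5)

print(ts_tokens)
print(masked, positions)
```

Because both modalities end up as integer tokens in a single sequence, masking every time series position and predicting it from the image tokens (or the reverse) is one way to picture cross-modal generation in this setting.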